22.1 Primary Pattern

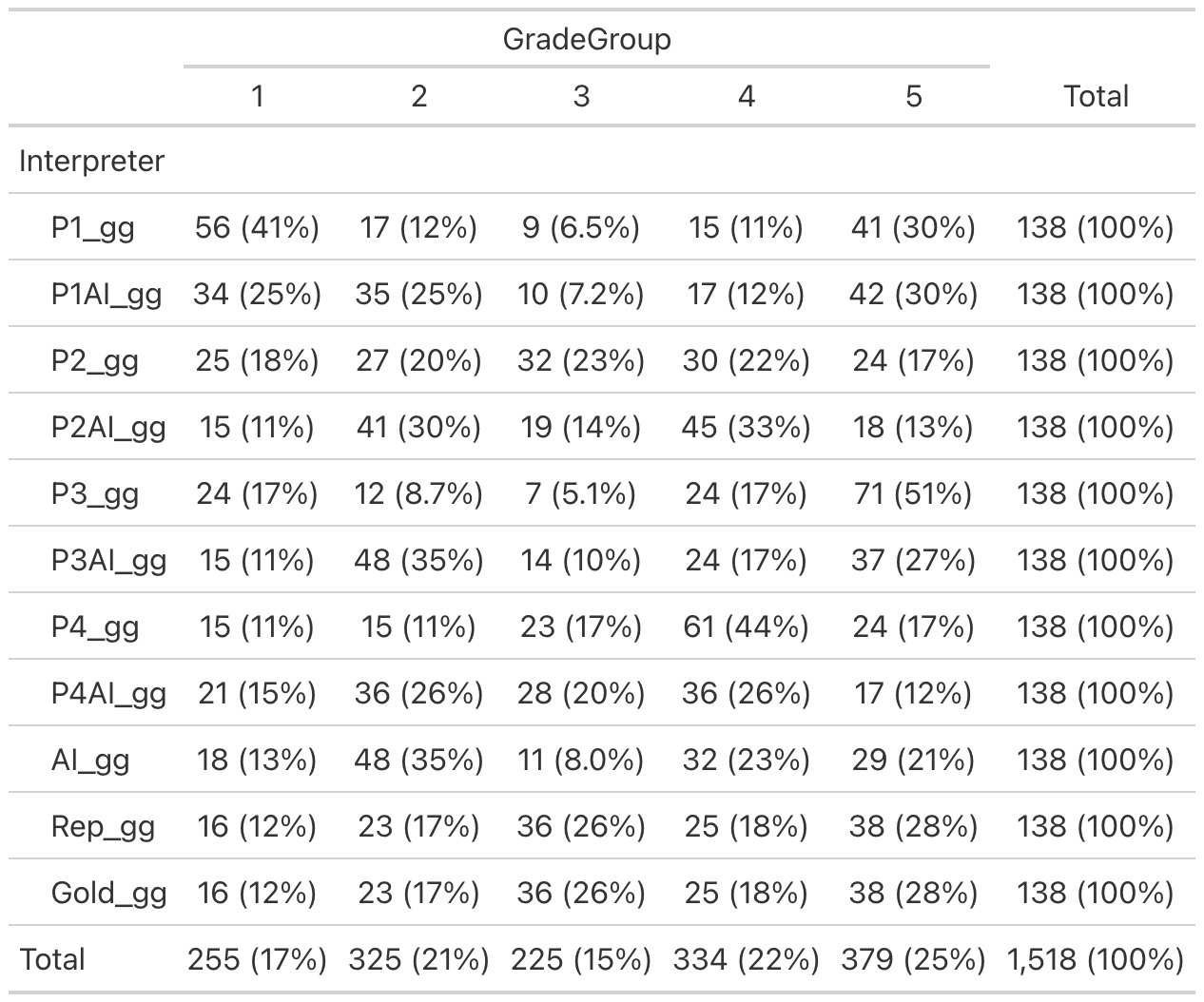

Note for Pathologists: Primary Gleason Pattern distribution across all interpreters (Pathologists with/without AI, AI model, Report, and Reference Diagnosis).

| Interpreter | Pattern 3 | Pattern 4 | Pattern 5 | Total |
|---|---|---|---|---|
| P1_p | 74 (54%) | 33 (24%) | 31 (22%) | 138 (100%) |
| P1AI_p | 69 (50%) | 51 (37%) | 18 (13%) | 138 (100%) |
| P2_p | 52 (38%) | 70 (51%) | 16 (12%) | 138 (100%) |
| P2AI_p | 56 (41%) | 76 (55%) | 6 (4.3%) | 138 (100%) |
| P3_p | 36 (26%) | 68 (49%) | 34 (25%) | 138 (100%) |
| P3AI_p | 63 (46%) | 68 (49%) | 7 (5.1%) | 138 (100%) |
| P4_p | 30 (22%) | 97 (70%) | 11 (8.0%) | 138 (100%) |
| P4AI_p | 57 (41%) | 73 (53%) | 8 (5.8%) | 138 (100%) |
| AI_p | 67 (49%) | 69 (50%) | 2 (1.4%) | 138 (100%) |
| Rep_p | 39 (28%) | 96 (70%) | 3 (2.2%) | 138 (100%) |
| Gold_p | 39 (28%) | 96 (70%) | 3 (2.2%) | 138 (100%) |
| Total | 582 (38%) | 797 (53%) | 139 (9.2%) | 1,518 (100%) |

22.1.1 Primary Pattern agreement

Note for Pathologists: Inter-rater agreement for Primary Pattern among pathologists (without AI) and the Reference Diagnosis.

INTERRATER RELIABILITY

Interrater Reliability

─────────────────────────────────────────────

Fleiss' Kappa for m Raters

─────────────────────────────────────────────

Subjects 138

Raters 5

Agreement % 31.88406

Kappa 0.4258547

z 20.73969

p-value < .0000001

─────────────────────────────────────────────

Primary Pattern agreement: Pathologists with AI and Gold Standard

Note for Pathologists: Inter-rater agreement for Primary Pattern among pathologists (with AI) and the Reference Diagnosis.

INTERRATER RELIABILITY

Interrater Reliability

─────────────────────────────────────────────

Fleiss' Kappa for m Raters

─────────────────────────────────────────────

Subjects 138

Raters 5

Agreement % 53.62319

Kappa 0.6116531

z 26.47632

p-value < .0000001

─────────────────────────────────────────────
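The two statistics reported in the reliability blocks above can be sketched in a few lines of Python. This is a minimal illustration of the textbook Fleiss' kappa formula, not the code that produced this report. It assumes that "Agreement %" denotes the share of cases on which all raters assign the same pattern. The function names (`fleiss_kappa`, `percent_full_agreement`) and the `toy` ratings are hypothetical; the study's case-level ratings are not reproduced here, so the sketch does not recompute the reported values.

```python
from collections import Counter


def fleiss_kappa(ratings, categories):
    """Fleiss' kappa for a list of subjects, each rated once by every rater.

    ratings    -- list of per-subject lists, one category label per rater
    categories -- list of all possible category labels
    """
    n_subjects = len(ratings)
    n_raters = len(ratings[0])

    # counts[i][j] = number of raters who put subject i into category j
    counts = []
    for row in ratings:
        tally = Counter(row)
        counts.append([tally.get(cat, 0) for cat in categories])

    # Observed agreement per subject, then averaged over subjects
    p_i = [
        (sum(n * n for n in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_bar = sum(p_i) / n_subjects

    # Chance agreement from the overall category proportions
    p_j = [
        sum(row[j] for row in counts) / (n_subjects * n_raters)
        for j in range(len(categories))
    ]
    p_e = sum(p * p for p in p_j)

    return (p_bar - p_e) / (1 - p_e)


def percent_full_agreement(ratings):
    """Percentage of subjects on which all raters chose the same category."""
    unanimous = sum(1 for row in ratings if len(set(row)) == 1)
    return 100.0 * unanimous / len(ratings)


# Hypothetical toy data: 4 cases, 5 raters, primary patterns 3/4/5
toy = [
    [3, 3, 3, 3, 3],
    [4, 4, 4, 4, 4],
    [3, 4, 4, 4, 4],
    [5, 5, 4, 4, 3],
]

if __name__ == "__main__":
    print(fleiss_kappa(toy, [3, 4, 5]))
    print(percent_full_agreement(toy))
```

Applied to the study data, `ratings` would hold 138 rows with 5 labels each (four pathologists plus the Reference Diagnosis). The z statistic and p-value in the output require the variance of kappa, which this sketch omits.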

Primary Pattern agreement: Pathologists without AI and Gold Standard